Supervised#

Shapelet forest vs. Nearest neighbors#

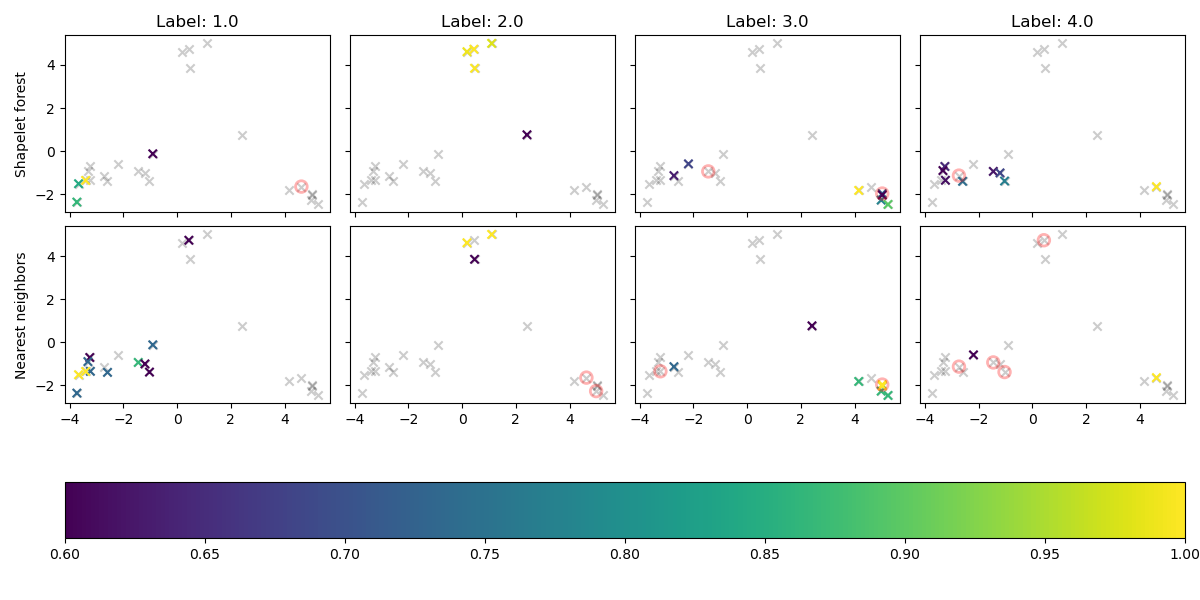

The following example show a comparison of the shapelet forest classifier and the nearest neighbor classifier.

import numpy as np

import matplotlib.pyplot as plt

from sklearn.decomposition import PCA

from sklearn.metrics import accuracy_score

from sklearn.model_selection import train_test_split

from sklearn.neighbors import KNeighborsClassifier

from sklearn.pipeline import make_pipeline

from wildboar.datasets import load_dataset

from wildboar.ensemble import ShapeletForestEmbedding, ShapeletForestClassifier

random_state = 1234

x, y = load_dataset("Car")

x_train, x_test, y_train, y_test = train_test_split(

x, y, test_size=0.2, random_state=random_state

)

f_embedding = make_pipeline(

ShapeletForestEmbedding(sparse_output=False, random_state=random_state),

PCA(n_components=2, random_state=random_state),

)

f_embedding.fit(x_train)

x_embedding = f_embedding.transform(x_test)

classifiers = [

("Shapelet forest", ShapeletForestClassifier(random_state=random_state)),

("Nearest neighbors", KNeighborsClassifier()),

]

classes = np.unique(y)

n_classes = len(classes)

fig, ax = plt.subplots(

nrows=len(classifiers),

ncols=n_classes,

figsize=(3 * n_classes, 6),

sharex=True,

sharey=True,

)

for i, (name, clf) in enumerate(classifiers):

clf.fit(x_train, y_train)

y_pred = clf.predict(x_test)

probas = clf.predict_proba(x_test)

for k in range(n_classes):

if i == 0:

ax[i, k].set_title("Label: %r" % classes[k])

if k == 0:

ax[i, k].set_ylabel(name)

ax[i, k].scatter(

x_embedding[:, 0],

x_embedding[:, 1],

c="black",

alpha=0.2,

marker="x",

)

mappable = ax[i, k].scatter(

x_embedding[y_pred == classes[k], 0],

x_embedding[y_pred == classes[k], 1],

c=probas[y_pred == classes[k], k],

marker="x",

cmap="viridis",

)

ax[i, k].scatter(

x_embedding[(y_test[y_test != y_pred] == classes[k]).nonzero()[0], 0],

x_embedding[(y_test[y_test != y_pred] == classes[k]).nonzero()[0], 1],

edgecolors="red",

linewidths=2,

alpha=0.3,

facecolors="None",

s=70,

marker="o",

)

plt.tight_layout()

fig.colorbar(mappable, ax=ax, orientation="horizontal")

plt.savefig("../fig/classification_sf_vs_nn.png")

Univariate shapelet forest#

The following example compare the AUC of the shapelet forest and the extra shapelet trees classifier

import numpy as np

from sklearn.model_selection import cross_validate, StratifiedKFold

from wildboar.datasets import load_dataset

from wildboar.ensemble import ShapeletForestClassifier, ExtraShapeletTreesClassifier

random_state = 1234

x, y = load_dataset("Beef")

classifiers = {

"Shapelet forest": ShapeletForestClassifier(

n_shapelets=10,

metric="scaled_euclidean",

n_jobs=-1,

random_state=random_state,

),

"Extra Shapelet Trees": ExtraShapeletTreesClassifier(

metric="scaled_euclidean",

n_jobs=-1,

random_state=random_state,

),

}

for name, clf in classifiers.items():

score = cross_validate(clf, x, y, scoring="roc_auc_ovo", n_jobs=1)

print("Classifier: %s" % name)

print(" - fit-time: %.2f" % np.mean(score["fit_time"]))

print(" - test-score: %.2f" % np.mean(score["test_score"]))

Output

Classifier: Shapelet forest

- fit-time: 1.07

- test-score: 0.89

Classifier: Extra Shapelet Trees

- fit-time: 0.21

- test-score: 0.86

Comparing classifiers#

The following example compare the AUC of several classifiers over several datasets

import numpy as np

import pandas as pd

from sklearn.model_selection import cross_validate

from wildboar.datasets import load_dataset, list_datasets

from wildboar.ensemble import ShapeletForestClassifier, ExtraShapeletTreesClassifier

from wildboar.distance.dtw import dtw_distance

from sklearn.neighbors import KNeighborsClassifier

random_state = 1234

classifiers = {

"Nearest neighbors": KNeighborsClassifier(

n_neighbors=1,

metric="euclidean",

),

"Shapelet forest": ShapeletForestClassifier(

n_shapelets=10,

metric="scaled_euclidean",

random_state=random_state,

n_jobs=-1,

),

"Extra shapelet trees": ExtraShapeletTreesClassifier(

metric="scaled_euclidean",

n_jobs=-1,

random_state=random_state,

),

}

repository = "wildboar/ucr-tiny"

datasets = list_datasets(repository)

df = pd.DataFrame(columns=classifiers.keys(), index=datasets, dtype=np.float)

for dataset in datasets:

print(dataset)

x, y = load_dataset(dataset, repository=repository)

for clf_name, clf in classifiers.items():

print(" ", clf_name)

score = cross_validate(clf, x, y, scoring="roc_auc_ovo", n_jobs=1)

df.loc[dataset, clf_name] = np.mean(score["test_score"])

df.to_csv("../tab/classification_cmp.csv", float_format="%.3f")

Nearest neighbors |

Shapelet forest |

Extra shapelet trees |

|

|---|---|---|---|

Beef |

0.762 |

0.891 |

0.858 |

Coffee |

1.000 |

1.000 |

1.000 |

GunPoint |

0.945 |

1.000 |

1.000 |

SyntheticControl |

0.949 |

1.000 |

1.000 |

TwoLeadECG |

0.997 |

1.000 |

1.000 |